
Artificial intelligence is critical to accelerating economic development globally. Yet its resource-intensive nature attaches a substantial cost to growth, and across the world, growing AI infrastructure is levying a price on the health and financial well-being of communities.

In Mexico, water-hungry data centers are proliferating in drought-ridden regions. In the US, AI’s soaring energy consumption has raised public concerns about rising electricity bills. Public backlash, coupled with energy shortages, has prompted data center developers to turn to aircraft engines and diesel generators for on-site power. Consider this: a large data centre, which typically requires 100 MW of capacity, consumes as much electricity as 100,000 households and uses 280 million litres of water per year. In Ireland, data centres accounted for around 22% of the country’s electricity consumption in 2024. The public, aggravated by high power bills and by the fossil fuels being deployed to keep electricity supply stable, is resisting the development of new centers. In India, data center energy consumption is comparatively low, at under 1% of total electricity in 2024. Even so, the growth of data centers is concerning because they are coming up in urban and industrial areas where land and water are in short supply.
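The household comparison can be sanity-checked with a rough calculation. The sketch below is illustrative only. It assumes the data centre draws its full 100 MW continuously throughout the year, which is an assumption not stated in the article (real facilities typically run below nameplate capacity). The function names are hypothetical.

```python
# Back-of-the-envelope check of the "100 MW vs. 100,000 households" comparison.
# Assumption (not from the article): the data centre draws its full capacity
# continuously, all year round.

HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def implied_household_kwh_per_year(capacity_mw: float, households: int) -> float:
    """Annual kWh per household implied by spreading the data centre's
    yearly electricity use evenly across `households`."""
    annual_kwh = capacity_mw * 1_000 * HOURS_PER_YEAR  # MW -> kW, then kW * hours
    return annual_kwh / households


def litres_per_day(annual_litres: float) -> float:
    """Average daily water use derived from an annual total."""
    return annual_litres / 365


if __name__ == "__main__":
    # Figures taken from the article: 100 MW, 100,000 households, 280 million litres.
    print(implied_household_kwh_per_year(100, 100_000))  # 8760.0
    print(round(litres_per_day(280e6)))                  # 767123
```

Under that assumption, the comparison implies an average household use of about 8,760 kWh per year, or a steady draw of 1 kW per household, and average water use of roughly 767,000 litres per day.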

Judging by these instances, it would seem that AI and the world’s climate goals run counter to each other. However, this narrative deserves to be challenged. If AI infrastructure is made resilient, communities will be unburdened and the potential of AI to achieve climate goals can be effectively leveraged.

Use cases, public resources, and clean energy need to be considered while building AI

This was the thrust of the discussions at the Resilience, Innovation and Efficiency Working Group, an event held ahead of the India AI Impact Summit 2026, in which Dalberg participated. The output of the deliberations is the Dalberg-authored Advancing Resilient AI Infrastructure Playbook, which charts a pathway to realise this ambition.

There are two major factors inhibiting the development of resilient AI. First, there’s a shortage of clean power. Globally, fossil fuels continue to provide up to 50% of electricity for data centres, which are expanding faster than clean energy infrastructure. Second, data centers have, so far, been deliberately situated in areas that have reliable power and network connectivity and are close to end users. These areas tend to be urban hubs that are already strained by high demand for water. Globally, 43% of data centres operate in places where water supply is stretched.

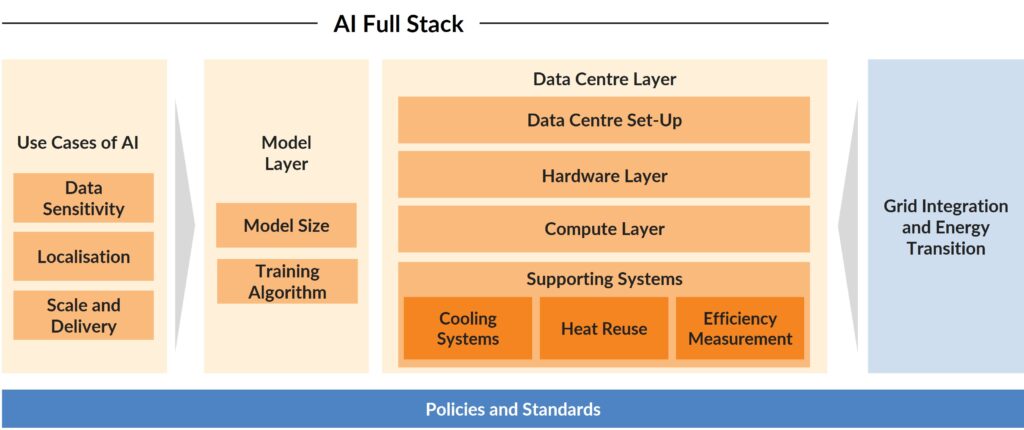

As a solution, the Playbook proposes a systems approach that rallies stakeholders to coordinate investments rather than making individual efforts. Why is this relevant? Because microchips and data centre infrastructure are not the sum of AI development. It also draws on resources in which people have a stake, such as land, water and capital. In practice, this means making efficient design and deployment choices across the AI stack, including:

- AI use cases: Hitching AI development to national socio-economic priorities and planning local infrastructure needs accordingly.

- Model layer: Defining service-level requirements such that appropriate model and infrastructure choices can be made; building fit-for-service models; developing edge computing to reduce data traffic to the cloud; and using smart training techniques to reduce the compute intensity of models.

- Data center layer: Situating data centers in regions abundant in clean energy and water; constructing centers that are low-carbon by design; using energy-efficient accelerators for compute; and designing efficient cooling systems, especially ones that make judicious use of water, among other measures.

- Grid integration and energy transition layer: Sourcing power from cleaner energy sources and smartly integrating with existing grid systems.

- Guiding principles for policies and standards: Devising a national AI strategy that steers full stack development while being in tune with national priorities and the availability of resources. This involves laying down standardized benchmarks to measure, compare and improve the energy and water consumption, and emissions footprints of AI infrastructure, and rewarding data centers that meet the norms. The idea is to reduce the burden on local resources while encouraging efficient use of resources.

The stack approach assumes greater significance in low- and middle-income countries (LMICs). AI infrastructure has so far been concentrated in developed countries. However, subsequent waves of development will take place in LMICs, where concerns such as the availability of water resources and last-mile electrification need to be taken into account, and AI-driven growth needs to be weighed against energy transition goals.

There are enough use cases to back development across the AI stack

There’s evidence that emerging economies can leapfrog the pains of AI development. For example, fit-for-purpose, small AI models optimized for specific tasks work well when connectivity is low and cost is a concern. Take Agrosavia, the Colombian Agricultural Research Corporation, which has developed AI-enabled irrigation tools for coffee farms. These tools use predictive models and local agronomic data to optimize irrigation timing and volumes in response to micro-climatic conditions. Designed and calibrated for coffee cultivation, they avoid the need for heavy compute and energy-intensive analytics.

For use cases that require local data processing, such as AI-powered robotics or decision systems supporting transport, energy and public safety, AI models can be designed to run on end-user devices such as smartphones and laptops instead of transmitting data to the cloud for computation. Edge AI is particularly useful in areas where resources and connectivity are constrained. Take PlantVillage’s Nuru app, which embeds edge AI directly onto the mobile phones of Kenyan farmers, enabling them to diagnose crop pests and diseases from images of leaves, even offline. The app uses machine learning to recognise symptoms and delivers actionable advice.

Much of the criticism of data centres centers on their immense consumption of electricity generated from fossil fuels. One way to mitigate their carbon footprint is to co-locate them with renewable energy generation sites such as solar and wind farms. A case in point is Moro Hub, a subsidiary of the digital arm of the Dubai Electricity and Water Authority (DEWA), which has situated its green data centre at the Mohammed bin Rashid Al Maktoum Solar Park, thus powering the data centre entirely with renewable energy.

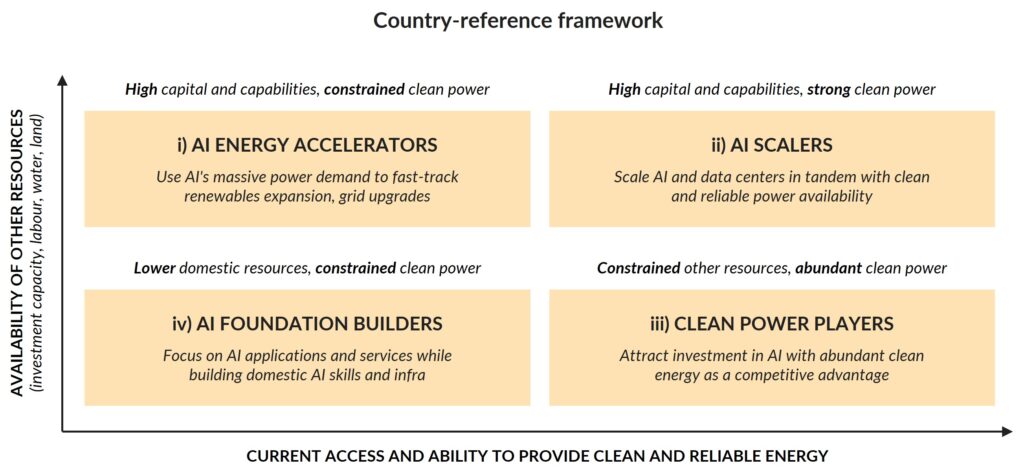

A country-level assessment of challenges and capabilities is a first step

Before solutions are devised and put into practice, it’s imperative to recognize that the deployment of AI is determined by more than technological capability: it also depends on a country’s existing resource and energy challenges. What are the conditions that will make AI viable and scalable?

The Playbook puts forth a Country Reference Framework aimed at aligning AI ambitions with resources and development priorities. It outlines four country archetypes:

- AI-energy accelerators: Countries that have ambitious AI goals but limitations in grid capacity or renewable energy. Consequently, AI infrastructure must scale within tight boundaries. However, AI-energy accelerators could use the scarcity to incentivize clean energy investment and grid upgrades. In other words, they can make a strong business case for efficient AI deployment.

- AI scalers: Countries with robust conditions to spur the development of resilient AI, making them candidates for becoming global AI hubs and centers of innovation in resilience.

- Clean power players: States rich in renewable energy but facing challenges related to capital, labour, land and institutional capacity. Clean power players can strategically leverage their abundance in the global AI market by hosting large data centers, perhaps even becoming regional hubs, while ensuring that these provide benefits such as the creation of local jobs.

- AI foundation builders: Countries that face challenges across parameters from clean energy to capital. These states can leverage the technology by adopting AI-enabled services to advance existing development priorities. Without pressuring systems, they can build the skills and institutions needed for future AI developments.

India can be an example for low- and middle-income countries

India has already begun translating its AI ambitions into practice. The country is actively procuring GPUs for AI infrastructure, and the India AI Mission has commissioned startups to develop indigenous large language models and established AI Kosh, a resource for local datasets. How do we channel this early momentum to build AI that’s resilient?

A step in this direction is to devise guidelines on how to build resilient AI infrastructure while expanding clean energy supply, gearing our approach towards LMICs. It is also worth consulting communities for insight while determining the approach, and developing a deployment framework that leaves communities as net beneficiaries of AI infrastructure deployment.

Cross-sector collaboration is also key. One of the reasons that AI development and climate resilience are considered adversaries is that they are seen to belong to different sectors, each governed by its respective ministry. The conflict melts away once parties across the board come together. Take, for instance, Bharat Climate Forum, a platform that ropes in policy, industry, finance and research actors to advance indigenous cleantech manufacturing.

AI has the capacity to positively transform economies and improve lives, which is why it’s so crucial to understand ground conditions and existing solutions while making decisions, and to keep people and the environment at the center of policy-making. The enthusiasm around AI was palpable at the India AI Impact Summit 2026 this past February. It’s time to harness that momentum to drive the country’s march to resilient development.